So I'm doing the good case now, when there is a full set of independent eigenvectors. That's right, e to the A t is I, plus A t, plus 1/2 of A t squared. So everybody remembers what A squared is. A squared is V lambda V inverse, times V lambda V inverse. And those cancel out to give V lambda squared V inverse, times t squared, and so on. You remember this A squared, so I'll take that away. Factor V out of the start, and factor V inverse out of the end. And in here I have V times V inverse is I, so that's fine. V and a V inverse, so I have a 1/2 lambda squared t squared. We expect that a V goes out at the far left at the front. This V inverse comes out at the far right. And what do I have there? I have the exponential series for lambda t. You see, so this is now my formula for e to the A t: it is V, times e to the lambda t, times V inverse.
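As a rough numerical check of the diagonalization formula, here is a minimal NumPy sketch. The function names `expm_eig` and `expm_series` and the 2 by 2 test matrix are illustrative choices, not part of the lecture, and the eigenvector route assumes the matrix really has n independent eigenvectors.

```python
import numpy as np

def expm_eig(A, t):
    """e^{At} = V e^{Lambda t} V^{-1}; assumes n independent eigenvectors."""
    eigvals, V = np.linalg.eig(A)                 # columns of V are eigenvectors
    exp_lambda_t = np.diag(np.exp(eigvals * t))   # e^{Lambda t} is diagonal
    return V @ exp_lambda_t @ np.linalg.inv(V)

def expm_series(A, t, terms=40):
    """Truncated series I + At + (At)^2/2! + ... for comparison."""
    At = np.asarray(A, dtype=float) * t
    result = np.eye(At.shape[0])
    term = np.eye(At.shape[0])                    # holds (At)^k / k!
    for k in range(1, terms):
        term = term @ At / k
        result = result + term
    return result

# Hypothetical example matrix with distinct eigenvalues -1 and -2.
A = np.array([[0.0, 1.0], [-2.0, -3.0]])
t = 0.7
via_eig = expm_eig(A, t)
via_series = expm_series(A, t)
print(np.allclose(via_eig, via_series))
```

The two routes should agree to rounding error here; for large or badly conditioned matrices neither of these simple versions is a robust way to compute the exponential.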

When I put this into the differential equation, it works. Now, is it better than what we had before, which was using eigenvalues and eigenvectors? It's better in one way. This exponential, this series, is totally fine whether we have n independent eigenvectors or not. So for matrices with repeated eigenvalues and missing eigenvectors, e to the A t is still the correct answer. But if we want to use eigenvalues and eigenvectors to compute e to the A t, because we don't want to add up an infinite series very often, then we would want n independent eigenvectors. So what am I saying? All right, suppose we have n independent eigenvectors. And we know that that means, in that case, A is V times lambda times V inverse. And we can write V inverse because the matrix V has the eigenvectors. If I have n independent eigenvectors, that matrix is invertible. I'm now going to use the diagonalization, the eigenvectors, and the eigenvalues for A. And now I can see what e to the A t is. It is always the identity, plus A t, and so on.
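To see that the series still gives an answer when eigenvectors are missing, here is a small worked example. The matrix is an illustrative choice, not one taken from the lecture: it has the repeated eigenvalue 1 and only one independent eigenvector, so it cannot be diagonalized.

$$
A = \begin{bmatrix} 1 & 1 \\ 0 & 1 \end{bmatrix},
\qquad
A^k = \begin{bmatrix} 1 & k \\ 0 & 1 \end{bmatrix},
\qquad
e^{At} = \sum_{k=0}^{\infty} \frac{t^k}{k!} A^k
= \begin{bmatrix} \sum \frac{t^k}{k!} & \sum \frac{k\,t^k}{k!} \\ 0 & \sum \frac{t^k}{k!} \end{bmatrix}
= \begin{bmatrix} e^{t} & t\,e^{t} \\ 0 & e^{t} \end{bmatrix}
$$

The extra factor of t in the corner is what the missing eigenvector produces; the formula with V and V inverse is not available for this matrix, but the series is.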

The derivative of this is the derivative of... that's a constant. I have an A squared, and I have a t squared. The derivative of t squared is 2t, so that'll just be a t. A cubed t cubed? The derivative of t cubed is 3t squared, so I have a t squared. And the 3 cancels the 3 and the 6, and leaves 1 over 2 factorial, and so on. And I look at that. And I say it's very much like the one above. So it's A e to the A t, is the derivative of my matrix exponential. So then if I add a y of 0 in here, that's just a constant vector.
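Written out, the term-by-term differentiation described here looks like the following (a sketch in standard notation, reading "1/2 A t squared" as one half of A t, squared):

$$
\frac{d}{dt}\,e^{At}
= \frac{d}{dt}\left(I + At + \tfrac{1}{2}A^2t^2 + \tfrac{1}{6}A^3t^3 + \cdots\right)
= A + A^2 t + \tfrac{1}{2}A^3t^2 + \cdots
= A\left(I + At + \tfrac{1}{2}A^2t^2 + \cdots\right)
= A\,e^{At}
$$

Multiplying on the right by the constant vector y(0) gives y(t) = e^{At} y(0) with dy/dt = A y, which is the differential equation.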

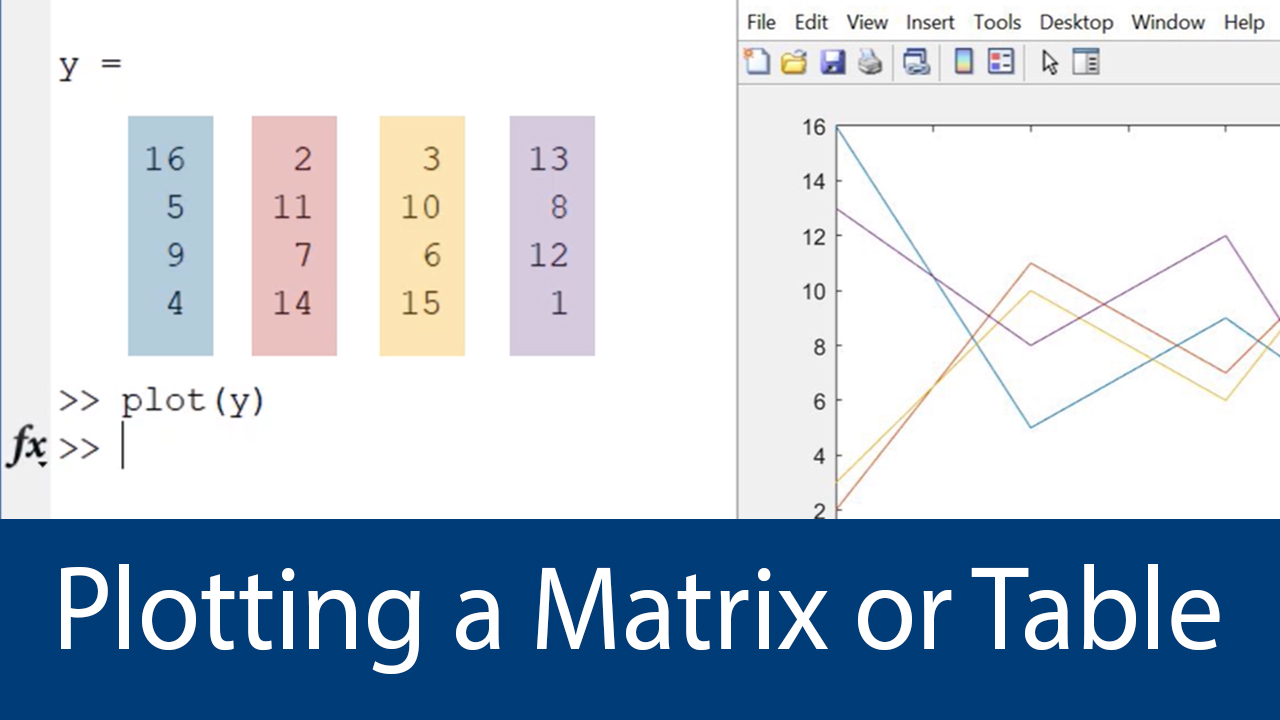

The function performs operations that easily partition into sections that execute concurrently. These sections must be able to execute with little communication between processes. They should require few sequential operations. The data size is large enough so that any advantages of concurrent execution outweigh the time required to partition the data and manage separate execution threads. For example, most functions speed up only when the array contains several thousand elements or more. The operation is not memory-bound; processing time is not dominated by memory access time. As a general rule, complicated functions speed up more than simple functions. The matrix multiply (X*Y) and matrix power (X^p) operators show significant increase in speed on large double-precision arrays (on order of 10,000 elements). The matrix analysis functions det, rcond, hess, and expm also show significant increase in speed on large double-precision arrays.

OK. We're still solving systems of differential equations with a matrix A in them. And now I want to create the exponential. It's just natural to produce e to the A, or e to the A t. So if we have one equation, small a, then we know the solution is e to the a t, times the starting value. Now we have n equations with a matrix A and a vector y. And the solution should be, at time t, e to the A t, times the starting value. It should be a perfect match with this one, where this had a number in the exponent and this has a matrix in the exponent. So the identity, plus A t, plus 1/2 A t squared, plus 1/6 of A t cubed, forever. The most important series in mathematics, I think. And it gives us an answer. Everything here, every term, is a matrix. Now, is that the right answer? We check that by putting it into the differential equation. So I want to put that solution into the equation.
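The series for e to the A t can be tried out directly. The following is a minimal NumPy sketch, assuming a truncation at a fixed number of terms; the name `expm_series`, the term count, and the test matrix are illustrative choices rather than anything from the lecture.

```python
import numpy as np

def expm_series(A, t, terms=30):
    """Approximate e^{At} by the truncated series I + At + (At)^2/2! + ..."""
    At = np.asarray(A, dtype=float) * t
    result = np.eye(At.shape[0])
    term = np.eye(At.shape[0])     # current term (At)^k / k!, starting at k = 0
    for k in range(1, terms):
        term = term @ At / k       # build (At)^k / k! from the previous term
        result = result + term
    return result

# Hypothetical test matrix with A^2 = -I, so the exact exponential is
# cos(t) I + sin(t) A = [[cos t, sin t], [-sin t, cos t]].
A = np.array([[0.0, 1.0], [-1.0, 0.0]])
t = 1.5
approx = expm_series(A, t)
exact = np.array([[np.cos(t), np.sin(t)], [-np.sin(t), np.cos(t)]])
print(np.allclose(approx, exact))
```

Every term in the loop is a matrix, as in the lecture. A fixed truncation is fine for a small example like this, but it is not how library routines such as MATLAB's `expm` compute the exponential.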
